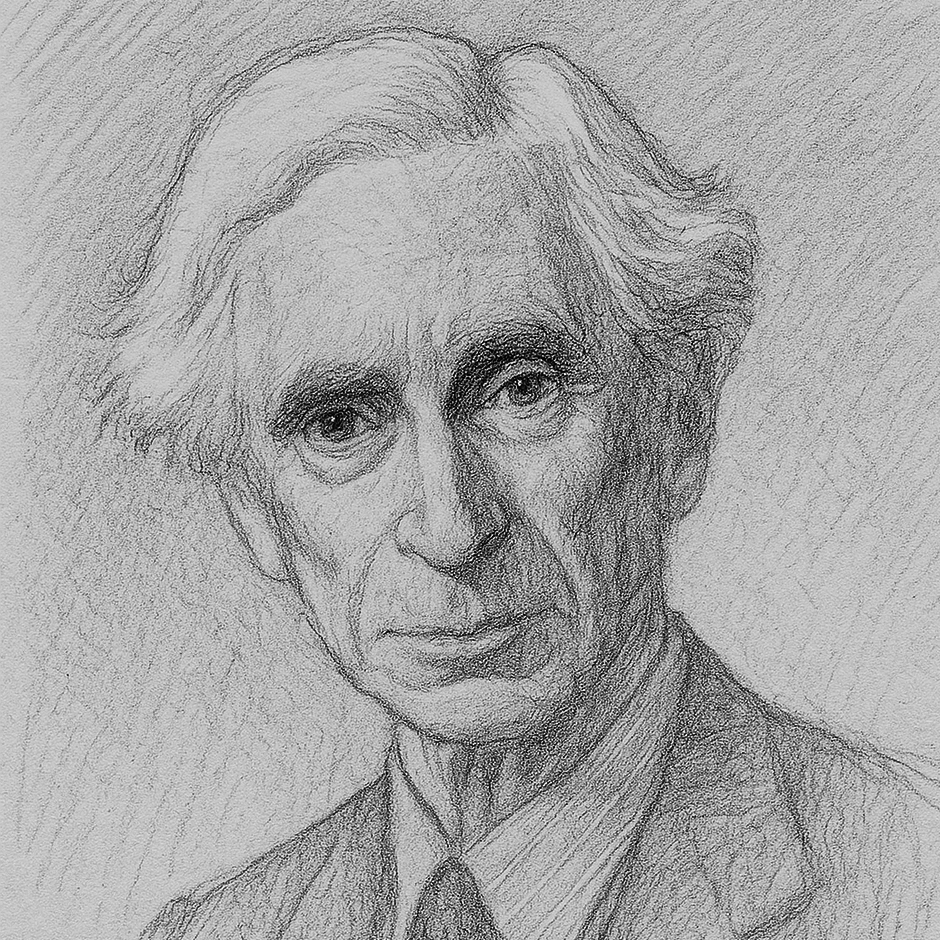

Bertrand Russell

1872-1970

British logician, mathematician and Nobel laureate, known for analytic philosophy

It is undesirable to believe a proposition when there is no ground whatever for supposing it true.

The Human Validator

Separate fact from fiction and shield against misinformation and deepfakes

Why this matters: Generative AI output often carries a confident tone that masks unfounded or outdated information and missing voices. It can include misinformation, deepfakes or outright hallucinations. Be a detective. Before you build on an AI answer, run a credibility check. A skeptical attitude helps you catch many errors quickly and recognize when deeper verification is needed. This habit protects your work, your reputation and the people who will act on or be affected by your conclusions.

Mini-tool: The Evidence Protocol

Consider yourself a forensic investigator. Generative AI is a probability engine, not a truth engine. Treat every output like testimony from a confident but unreliable witness who is known to fabricate details to please the interrogator. Evidence must be bagged, tagged, and verified before it can be admitted into the “court” of your work.

Try it

Create an investigator’s log for your work with AI following these steps:

- Determine the source. Track the chain of custody by clicking through links. No source? Tag it: “Origin unknown – unreliable.”

- Check the dates. Is this a fresh piece of evidence, or could it be out of date?

- Cross-reference the claim with other known, reputable sources (e.g., academic journals, Google Scholar or established news organizations).

- Search for what’s not being said. Seek out opposing views to see if the “evidence” holds up under cross-examination. Look for signs of deepfakes or manipulation.

- Recompute any numbers. Find the original source for any quotes. Tiny details often reveal big lies.

- Create a detailed log of your work, including the role AI played.
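If you keep your investigator's log digitally, the steps above could be captured in a small structure like the one below. This is only an illustrative sketch: the `EvidenceEntry` name, its fields, and the one-year freshness threshold are assumptions made for the example, not part of the Evidence Protocol itself. Adjust them to fit your own work.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class EvidenceEntry:
    """One row in a hypothetical investigator's log (all field names are illustrative)."""
    claim: str                              # the AI statement being checked
    ai_role: str                            # how AI was involved in the work
    source: Optional[str] = None            # where the claim was traced to, if anywhere
    source_date: Optional[date] = None      # publication date of that source
    corroborated_by: List[str] = field(default_factory=list)  # other reputable sources
    opposing_views_checked: bool = False    # looked for what is not being said
    details_rechecked: bool = False         # numbers recomputed, quotes traced

    def open_issues(self, today: date, max_age_days: int = 365) -> List[str]:
        """List the protocol steps that are still unresolved for this claim.

        max_age_days is an assumed freshness threshold, not a fixed rule.
        """
        issues = []
        if not self.source:
            issues.append("Origin unknown – unreliable")
        if self.source_date is None:
            issues.append("No date found")
        elif (today - self.source_date).days > max_age_days:
            issues.append("Possibly out of date")
        if not self.corroborated_by:
            issues.append("Not cross-referenced")
        if not self.opposing_views_checked:
            issues.append("Opposing views not checked")
        if not self.details_rechecked:
            issues.append("Numbers or quotes not rechecked")
        return issues


# Example: a claim logged straight from an AI answer, before any checking.
entry = EvidenceEntry(
    claim="Example claim from an AI answer",
    ai_role="AI produced the first draft",
)
for issue in entry.open_issues(today=date(2025, 1, 1)):
    print(issue)
```

An entry with no open issues is ready to be "admitted"; anything still listed shows where deeper verification is needed.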